Cursor

Configure Cursor IDE to use Large Language Models hosted by AI Enabler.

Compatibility Notice: As of Cursor version 2.6.22, new client-side validation rules in the IDE reject our model names, preventing the use of serverless models like minimax-m2.7 or glm-5-fp8.

If you are using a current version of Cursor, the integration described below will not function at this time. We are monitoring the situation for a fix or workaround and will update this guide as soon as one becomes available.

This tutorial walks you through configuring Cursor to access LLMs hosted by AI Enabler through an OpenAI-compatible API, for use in Chat, inline edits, and Agent mode. You'll point Cursor at the AI Enabler base URL so that its built-in AI features run through Cast AI infrastructure.

Overview

By the end of this tutorial, you'll be able to:

- Use GLM 5 and MiniMax M2.7 through Cursor's native Chat and Agent modes

- Route all Cursor AI requests to AI Enabler

- Switch between available models directly from Cursor's model selector

This tutorial is intended for developers who have a Cursor Pro subscription or higher. The free tier does not allow configuring custom API keys.

Note: The AI Enabler base URL works with any OpenAI-compatible client. This tutorial covers Cursor, but the same base URL and API key work with OpenCode and other compatible tools.

Note: AI Enabler also offers Kimi K2.5. However, Cursor ships with its own Kimi K2.5 integration that overrides custom endpoints, so the model is not available through this setup.

Prerequisites

Before starting, ensure you have:

- A Cast AI API key — Generate one from the Cast AI console under AI Enabler > Overview

- Cursor IDE — Download from cursor.com

- A Cursor Pro subscription or higher

Step 1: Open Cursor Settings

Open Cursor and navigate to Cursor Settings:

- macOS: Menu bar > Cursor > Settings > Cursor Settings

- Windows/Linux: Menu bar > File > Settings > Cursor Settings

In the left sidebar, click Models.

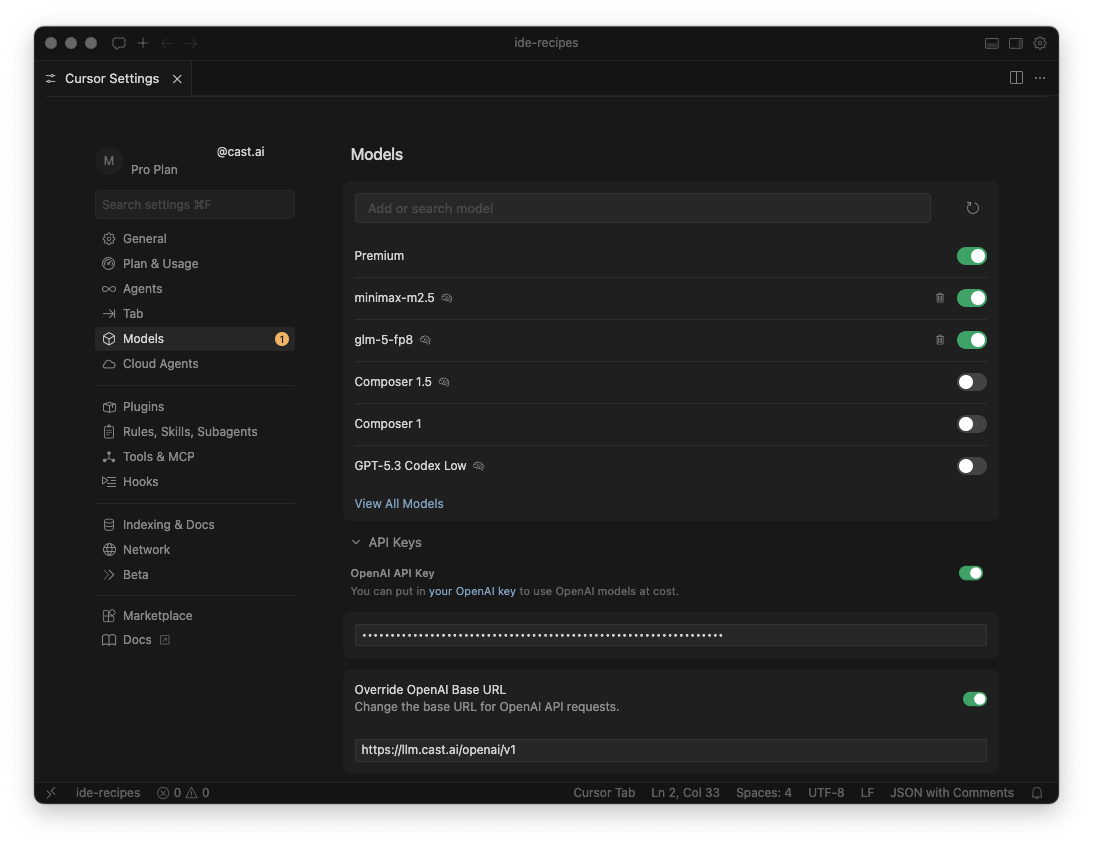

Step 2: Configure the OpenAI base URL

In the Models settings page, scroll down to API Keys and configure the following:

- Enter https://llm.cast.ai/openai/v1 in the Override OpenAI Base URL field, then toggle it ON.

- Enter your Cast AI API key in the OpenAI API Key field, then toggle it ON.

This tells Cursor to route all OpenAI-compatible requests through AI Enabler instead of OpenAI's default endpoint.
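To check the base URL and API key independently of Cursor, a similar request can be assembled with a short script. This is a minimal sketch, not part of the official setup. It assumes the endpoint follows the usual OpenAI convention of a `POST /chat/completions` route with a `Bearer` token, which this guide does not confirm. The helper names `build_chat_request` and `send_chat_request` are hypothetical.

```python
import json
import urllib.request

# Base URL taken from this guide. The /chat/completions suffix is an
# assumption based on the OpenAI API convention.
BASE_URL = "https://llm.cast.ai/openai/v1"


def build_chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_chat_request(request: urllib.request.Request, timeout: float = 30.0) -> str:
    """Send the request and return the raw response body as text.

    urllib raises urllib.error.HTTPError for 4xx/5xx responses, so an invalid
    key or unknown model name surfaces as an exception rather than a body.
    """
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")
```

For example, `send_chat_request(build_chat_request(api_key, "minimax-m2.7", "Hello"))` would send a single test prompt, where `api_key` holds your Cast AI API key. Keeping request construction separate from sending makes it possible to inspect the URL and headers without making a network call.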

Step 3: Add models

In the same Models page, use the Add or search model field at the top to add the following models:

- glm-5-fp8

- minimax-m2.7

Toggle each model ON after adding it.

Your settings should look like this:

Cursor Settings — Models page with minimax-m2.7 and glm-5-fp8 enabled, API key and Override OpenAI Base URL configured

Step 4: Verify your configuration

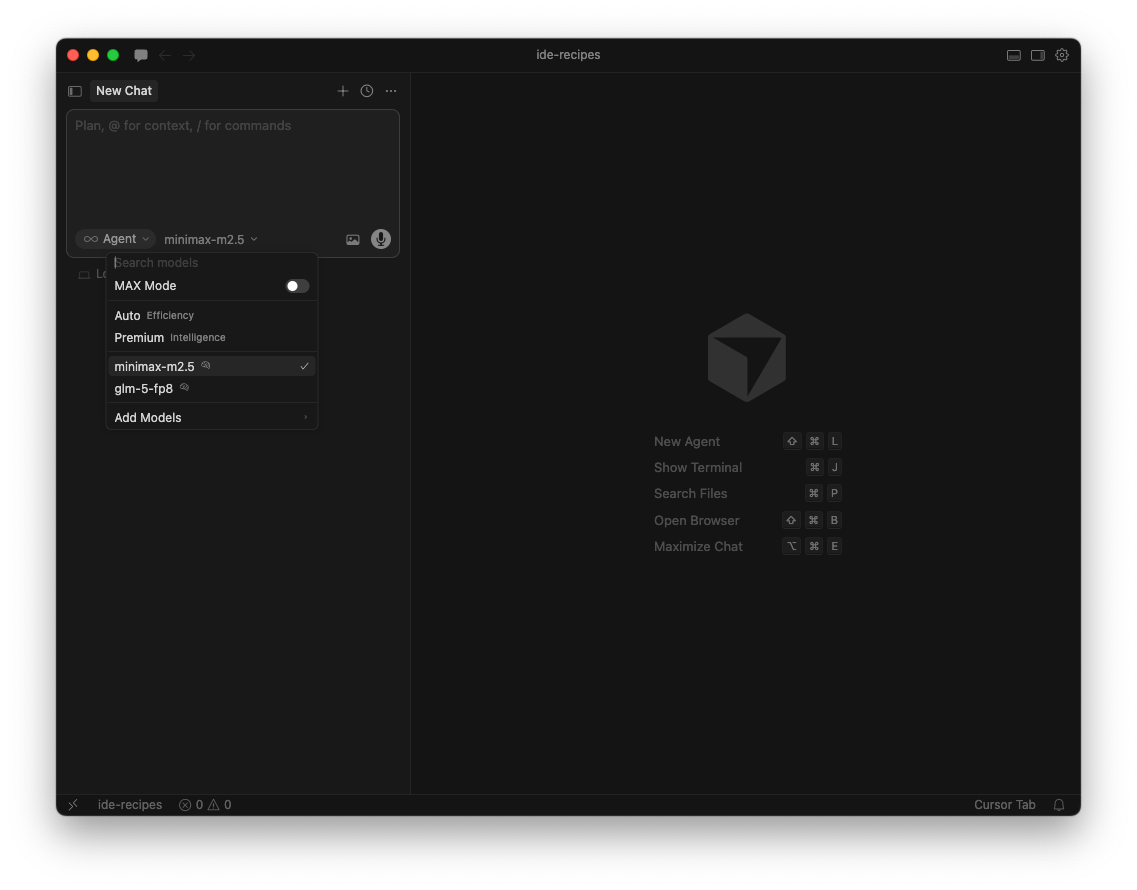

- Open Cursor Chat.

- Click the model selector at the bottom of the chat input panel and switch from Auto to minimax-m2.7. The model selector should show minimax-m2.7 and glm-5-fp8 as available options:

Cursor Chat: model selector showing minimax-m2.7 selected

- Send a test prompt, for example, Hello. If you get a response, the connection is working.
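When the endpoint is tested from a script rather than through Cursor Chat, "a response" can be checked programmatically. The sketch below assumes the endpoint returns the standard OpenAI chat completion JSON shape, with the reply under `choices[0].message.content`. The helper name `extract_reply` is hypothetical, and the sample payload is hand-written for illustration, not actual AI Enabler output.

```python
import json


def extract_reply(raw_body: str) -> str:
    """Return the assistant text from an OpenAI-style chat completion body.

    Raises ValueError when no usable reply is present, which usually means
    the request was rejected or the response shape differs from the
    assumption stated above.
    """
    payload = json.loads(raw_body)
    choices = payload.get("choices") or []
    if not choices:
        raise ValueError(f"no choices in response: {payload.get('error', payload)}")
    content = choices[0].get("message", {}).get("content")
    if not content:
        raise ValueError("first choice has no message content")
    return content


# Hand-written sample in the assumed response shape.
sample = json.dumps({
    "model": "minimax-m2.7",
    "choices": [
        {"index": 0, "message": {"role": "assistant", "content": "Hi there!"}}
    ],
})
print(extract_reply(sample))
```

A non-empty string from `extract_reply` is the scripted counterpart of seeing a reply in Cursor Chat. A `ValueError` points to a rejected request or an unexpected response format.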
